Somewhere right now, there are over 30,000 OpenClaw instances exposed to the public internet without authentication. No API key. No password. No access control of any kind. Just an autonomous AI agent with whatever permissions its owner configured, accessible to anyone who finds the port.

That number comes from security researchers who scanned for exposed instances in early 2026. It’s not a theoretical risk model. It’s a headcount.

OpenClaw, the open-source AI agent framework with 230,000+ GitHub stars, has become the fastest-adopted autonomous agent platform in history. It’s also become one of the most actively targeted. And the security community is playing catch-up.

This is a breakdown of what’s actually happening, what the real attack vectors are, and what your security team should be doing about it, whether you’re running OpenClaw or trying to stop your employees from running it unsanctioned.

CVE-2026-25253: The One-Click RCE That Changed the Conversation

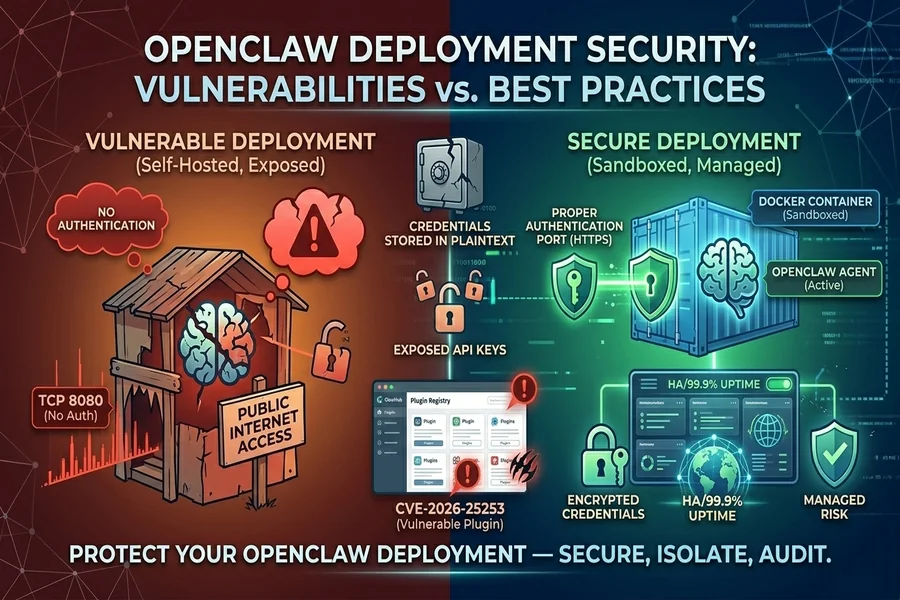

In January 2026, researchers disclosed CVE-2026-25253, a one-click remote code execution vulnerability affecting all OpenClaw versions prior to v2026.1.29. The attack was straightforward: a crafted payload delivered through an agent interaction could achieve arbitrary code execution on the host system.

For anyone running OpenClaw in Docker without proper isolation, which describes the majority of self-hosted deployments, this meant full host compromise through a single malicious input.

The vulnerability was patched quickly. The OpenClaw project responded within days. But here’s the problem: patching speed is irrelevant if operators don’t update.

Given those 30,000 unauthenticated instances, the patch adoption rate for self-hosted deployments remains an open question. There’s no centralized telemetry. No forced updates. No way to know how many instances are still running vulnerable versions months after the fix shipped.
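For operators who can at least read the version tag off each instance, checking it against the fixed release is mechanical. Here is a minimal sketch, assuming tags follow the same shape as v2026.1.29 (the only tag named in this article). The function names are illustrative and not part of OpenClaw.

```python
from __future__ import annotations

import re

# First fixed release, as stated in the CVE-2026-25253 discussion above.
PATCHED = (2026, 1, 29)


def parse_version(tag: str) -> tuple[int, ...]:
    """Parse a tag such as 'v2026.1.29' into a comparable tuple of ints."""
    match = re.fullmatch(r"v?(\d+)\.(\d+)\.(\d+)", tag.strip())
    if match is None:
        # Assumption: anything outside this shape needs manual review.
        raise ValueError(f"unrecognized version tag: {tag!r}")
    return tuple(int(part) for part in match.groups())


def is_vulnerable(tag: str) -> bool:
    """True when the tag sorts strictly before the patched release."""
    return parse_version(tag) < PATCHED
```

Comparing integer tuples rather than raw strings avoids the classic mistake where "v2026.1.9" sorts after "v2026.1.29" lexically.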

This is the fundamental tension with self-hosted autonomous agents. The security of the framework is only as good as the infrastructure practices of whoever deployed it. And based on what the scan data shows, those practices are often nonexistent.

ClawHavoc: When the Plugin Registry Becomes the Attack Surface

But CVE-2026-25253 isn’t even the most concerning development.

The ClawHavoc campaign, identified by threat researchers in early 2026, found 824 malicious skills published on ClawHub, the community registry where OpenClaw users discover and install agent plugins. That represents roughly 20% of the entire registry.

Stay with me here, because the implications of this are significant.

OpenClaw’s power comes from its skill ecosystem. Skills are plugins that give agents capabilities: web browsing, code execution, file management, API integrations. When you install a skill from ClawHub, you’re giving that code execution privileges inside your agent’s runtime environment.

The malicious skills discovered in the ClawHavoc campaign did everything from credential exfiltration to establishing reverse shells. Some modified agent behavior subtly, altering responses or silently forwarding data to external endpoints. The sophistication varied, but the attack vector was consistent: developers trust community-published skills the same way they trust npm packages, and the vetting process has similar gaps.

For security teams, this is a familiar pattern. Supply chain attacks through plugin registries mirror what we’ve seen with npm, PyPI, and Docker Hub. But with OpenClaw, the stakes are different: these plugins run inside an autonomous agent that may have broad system access, persistent memory, and the ability to take actions without human approval at each step.

Across the specific threat vectors facing OpenClaw deployments, from skill supply chain risks to credential exposure patterns, the attack surface is broader than most initial assessments suggest.

The Meta Ban: When Big Tech Says “No”

In February 2026, Meta internally banned OpenClaw on all work devices. Employees face termination for installing it. The catalyst? Meta researcher Summer Yue’s OpenClaw agent deleted her emails while ignoring stop commands.

Google took a different approach, banning users who were overloading the Antigravity backend through OpenClaw agent requests.

These aren’t cautionary anecdotes. They’re organizational risk assessments from companies with some of the largest security teams on the planet.

The Meta incident highlights a specific threat model that traditional vulnerability assessments miss: agent autonomy as a security risk. Even without a CVE, even with legitimate skills, an OpenClaw agent with broad permissions can cause damage through normal operation if its behavior deviates from user intent.

Elon Musk’s viral tweet about “people giving root access to their entire life” hit 48,000+ engagements. The framing was dramatic, but the technical reality isn’t far off. An OpenClaw agent with filesystem access, email integration, and browser capabilities has a blast radius that extends well beyond what most users consider when they’re configuring it.

What the Actual Attack Surface Looks Like

Let’s map this systematically. If you’re running an OpenClaw security assessment, here are the threat layers that matter:

Layer 1: Infrastructure exposure. Is the instance internet-facing? Is authentication enabled? Are management ports filtered? The 30,000 exposed instances suggest most operators skip basic network security. At minimum, every self-hosted deployment needs authentication, TLS termination, and firewall rules limiting access.

Layer 2: Credential management. OpenClaw configurations typically include API keys for LLM providers, platform tokens for Slack/Discord/Telegram, and potentially access credentials for integrated services. In self-hosted deployments, these often live in plaintext environment variables or YAML configuration files. No encryption at rest. No rotation policies. A single compromised instance leaks every credential it holds.

Layer 3: Skill supply chain. Every installed skill is executable code running inside the agent’s context. Without signature verification or sandboxing at the skill level, a malicious skill has the same permissions as the agent itself.

Layer 4: Agent behavior. This is the novel vector. Even with legitimate software and clean skills, an autonomous agent can take actions that create security incidents: sending unintended data, modifying files, or interacting with external services in ways that violate compliance requirements.
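The first two layers lend themselves to simple automated checks. Here is a rough sketch, assuming you can extract a bind address, an auth flag, and a TLS flag for each instance, and that configuration lives in flat `KEY=value` or `key: value` lines. Those field names and the key-name patterns are illustrative guesses, not OpenClaw’s actual configuration schema.

```python
from __future__ import annotations

import ipaddress
import re

# Hypothetical key-name fragments that usually indicate a secret value.
SECRET_KEY_PATTERN = re.compile(r"(api[_-]?key|token|secret|password)", re.IGNORECASE)


def exposure_findings(bind_host: str, auth_enabled: bool, tls_enabled: bool) -> list[str]:
    """Flag Layer 1 problems for one instance. bind_host must be an IP literal."""
    findings = []
    address = ipaddress.ip_address(bind_host)  # raises ValueError for hostnames
    if not address.is_loopback:
        findings.append(f"listens on non-loopback address {bind_host}")
        if not auth_enabled:
            findings.append("reachable without authentication")
        if not tls_enabled:
            findings.append("traffic is not protected by TLS")
    return findings


def plaintext_secret_keys(config_text: str) -> list[str]:
    """Return names of entries that look like secrets stored inline (Layer 2)."""
    hits = []
    for line in config_text.splitlines():
        stripped = line.strip()
        if not stripped or stripped.startswith("#"):
            continue
        match = re.match(r"([A-Za-z0-9_.-]+)\s*[:=]\s*(.+)", stripped)
        if match is None:
            continue
        key = match.group(1)
        value = match.group(2).strip().strip("'\"")
        # Skip ${...} references, which defer to an external secret store.
        if SECRET_KEY_PATTERN.search(key) and value and not value.startswith("${"):
            hits.append(key)
    return hits
```

A name-based scan like this only finds secrets whose keys look like secrets. Treat a clean result as a starting point, not as proof that nothing is stored in plaintext.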

What Security Teams Should Actually Do

If OpenClaw is running in your environment, sanctioned or not, here’s a practical response framework.

First: discover. Run internal network scans for OpenClaw’s default ports. You may be surprised. Shadow IT adoption of OpenClaw is widespread, and most instances won’t appear in your asset inventory.
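A discovery pass can start as a plain TCP connect check across a host list. The sketch below deliberately takes the port as a parameter, because this article does not state OpenClaw’s default ports. Substitute whatever your deployments actually use, and only scan networks you are authorized to scan.

```python
from __future__ import annotations

import socket


def is_port_open(host: str, port: int, timeout: float = 0.5) -> bool:
    """Attempt a TCP connection; True means something accepted it."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and unreachable hosts.
        return False


def find_listeners(hosts: list[str], port: int) -> list[str]:
    """Return the subset of hosts that accept connections on the given port."""
    return [host for host in hosts if is_port_open(host, port)]
```

An open port only tells you that something is listening. Confirming that it is an OpenClaw instance, and whether it demands authentication, still needs a follow-up check against each hit.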

Second: isolate. Every OpenClaw instance should run in a sandboxed environment — Docker with restricted capabilities at minimum, ideally with network-level isolation from production systems. Managed infrastructure options like BetterClaw’s secured deployment environment or hardened VPS configurations on DigitalOcean enforce this isolation by default, which eliminates the most common misconfiguration vector. Self-hosted deployments need explicit hardening.
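To make “restricted capabilities” concrete, here is one way to assemble a locked-down `docker run` invocation. The flags are standard Docker options. The image name, network name, UID, and resource limits are placeholders chosen for illustration, and a real OpenClaw container may need specific writable paths that this sketch does not know about.

```python
from __future__ import annotations


def hardened_docker_args(image: str, network: str) -> list[str]:
    """Build a `docker run` argument list with a reduced privilege set."""
    return [
        "docker", "run", "--detach",
        "--cap-drop=ALL",                    # drop every Linux capability
        "--security-opt=no-new-privileges",  # block escalation via setuid binaries
        "--read-only",                       # root filesystem mounted read-only
        "--tmpfs=/tmp",                      # in-memory scratch space only
        "--pids-limit=256",                  # cap process count (illustrative value)
        "--memory=1g",                       # cap memory (illustrative value)
        "--user=10001:10001",                # unprivileged UID:GID (illustrative)
        f"--network={network}",              # dedicated network, separate from production
        image,
    ]
```

The list can be passed to `subprocess.run(args, check=True)`. What it leaves out matters as much as what it includes: no `--privileged`, no host networking, and no Docker socket mount.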

Third: audit credentials. Identify every API key and access token configured in your OpenClaw instances. Rotate anything that’s been stored in plaintext. Implement encrypted credential storage; AES-256 at rest is the baseline.

Fourth: vet the skills. Audit every installed skill against a known-good baseline. Remove anything sourced from ClawHub without manual code review. Consider maintaining an internal approved-skills registry.
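A known-good baseline can be as simple as a map from skill name to content hash, recorded once the code has been reviewed. The sketch below assumes each installed skill lives in its own directory under a common root. That layout is a guess for illustration, not a documented OpenClaw convention.

```python
from __future__ import annotations

import hashlib
from pathlib import Path


def skill_digest(skill_dir: Path) -> str:
    """SHA-256 over every file's relative path and bytes, in sorted order."""
    digest = hashlib.sha256()
    for path in sorted(p for p in skill_dir.rglob("*") if p.is_file()):
        name = path.relative_to(skill_dir).as_posix().encode("utf-8")
        data = path.read_bytes()
        # Length prefixes keep path and content boundaries unambiguous.
        digest.update(len(name).to_bytes(8, "big"))
        digest.update(name)
        digest.update(len(data).to_bytes(8, "big"))
        digest.update(data)
    return digest.hexdigest()


def unapproved_skills(skills_root: Path, approved: dict[str, str]) -> list[str]:
    """Names of skills whose digest is absent from, or differs from, the baseline."""
    flagged = []
    for skill_dir in sorted(p for p in skills_root.iterdir() if p.is_dir()):
        if approved.get(skill_dir.name) != skill_digest(skill_dir):
            flagged.append(skill_dir.name)
    return flagged
```

Hashing catches drift from what was reviewed, including a silent update to a skill that was clean at install time. It says nothing about whether the reviewed version was safe in the first place, so the manual code review still has to happen.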

Fifth: monitor behavior. Deploy logging that captures agent actions: not just errors, but actual operations. What files did it access? What APIs did it call? What messages did it send? Anomaly detection on agent behavior is the emerging requirement that most teams aren’t addressing yet. Some managed hosting platforms now include real-time health monitoring with automatic circuit breakers that pause agents exhibiting unexpected behavior patterns. Services like xCloud and BetterClaw build this in natively, which is worth evaluating against building your own monitoring stack.
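If you do build your own, the core of an action log with a circuit breaker is small. This is a minimal sketch under simple assumptions: the only anomaly signal is the rate of actions inside a sliding window, and something in your stack calls `record` before each agent action. The class and its thresholds are hypothetical and are not an OpenClaw or vendor API.

```python
from __future__ import annotations

from collections import deque
from dataclasses import dataclass, field


@dataclass
class ActionBreaker:
    """Logs agent actions and pauses the agent when the action rate spikes."""

    max_actions: int
    window_seconds: float
    paused: bool = False
    _timestamps: deque = field(default_factory=deque)
    log: list = field(default_factory=list)

    def record(self, timestamp: float, action: str, target: str) -> bool:
        """Log one action; return True if allowed, False if the breaker is open."""
        if self.paused:
            self.log.append((timestamp, action, target, "blocked"))
            return False
        # Discard timestamps that have aged out of the sliding window.
        while self._timestamps and timestamp - self._timestamps[0] >= self.window_seconds:
            self._timestamps.popleft()
        if len(self._timestamps) >= self.max_actions:
            self.paused = True  # stays paused until a human calls reset()
            self.log.append((timestamp, action, target, "blocked"))
            return False
        self._timestamps.append(timestamp)
        self.log.append((timestamp, action, target, "allowed"))
        return True

    def reset(self) -> None:
        """Manually re-arm the breaker after review."""
        self.paused = False
        self._timestamps.clear()
```

Keeping the breaker latched until a manual reset is a deliberate choice. An agent that has just ignored stop commands should not get to resume on a timer.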

The Bigger Problem Nobody Is Solving Yet

Here’s what keeps me up at night about OpenClaw security.

The framework is moving to an open-source foundation as Peter Steinberger joins OpenAI. That’s generally positive for governance. But the 44,000+ forks mean the ecosystem is deeply fragmented. Security patches in the main branch don’t automatically propagate to forked deployments. Custom builds diverge from the patched codebase quickly.

We don’t have established security benchmarks for autonomous AI agents. There’s no CIS benchmark for OpenClaw. No NIST framework for agent behavior monitoring. No standardized way to assess whether an agent’s actions fall within expected parameters.

The security industry is still applying traditional application security thinking (scan for CVEs, patch the vuln, move on) to a fundamentally new kind of software: autonomous systems that make decisions and take actions continuously, often with broad permissions.

That model doesn’t hold. A patched OpenClaw instance with clean skills and proper authentication can still create a security incident through legitimate operation. Until we develop security frameworks that account for agent autonomy as a distinct threat vector, we’re patching symptoms while the underlying risk model remains unaddressed.

The tools will catch up. They always do. But right now, every organization running OpenClaw is writing its own security playbook from scratch. The smart ones are starting today.